Difference between revisions of "AviSynth"

From SDA Knowledge Base

Ballofsnow (Talk | contribs) m (→Part 6: Trimming) |

Manocheese (Talk | contribs) (→Determining the resolution of the game: Added link to the DF page) |

||

| (92 intermediate revisions by 4 users not shown) | |||

| Line 1: | Line 1: | ||

==Introduction== | ==Introduction== | ||

| − | + | IMPORTANT NOTE: AviSynth scripting is an advanced topic. All beginners are recommended to use [[Anri-chan]] instead. | |

| − | Let's say you're still using | + | To put it briefly, AviSynth is a video editor like VirtualDub except everything is done with scripts. For details, check [http://en.wikipedia.org/wiki/AviSynth Wikipedia]. The most important thing to learn right now is the concept of AviSynth. |

| + | |||

| + | Let's say you're still using VirtualDub: | ||

*You go through the menu or drag and drop your source video inside the program. | *You go through the menu or drag and drop your source video inside the program. | ||

| Line 11: | Line 13: | ||

*Resizing has made the picture blurry, so you add the sharpen filter. | *Resizing has made the picture blurry, so you add the sharpen filter. | ||

| − | + | You did all that by moving your mouse, going through menus, etc. With AviSynth you're creating a text-based file (.avs) to tell it what to do with text commands. The above would be, as an example: | |

*avisource("myvideo.avi") | *avisource("myvideo.avi") | ||

| Line 19: | Line 21: | ||

*XSharpen(30,40) | *XSharpen(30,40) | ||

| − | You save the avs file, and load that into | + | You save the avs file, and load that into MeGUI or VirtualDub for final compression. The advantages are that you don't have to go through as many menus, you don't have to remember which frames you want to cut out, you have access to more advanced deinterlacing filters like mvbob, and you can keep your scripts forever so that you don't have to start from scratch in case you want to re-encode them later. |

===How to use this guide.=== | ===How to use this guide.=== | ||

| − | Yes, | + | Yes, AviSynth can be confusing and hard to learn, but it is very rewarding once you get the hang of it. I suggest you look at the sample scripts at the bottom of the page to get an idea of what a final script looks like. Then go through each section putting whatever you need into your own script. If you are having trouble, <i>and you probably will</i> >:), do not hesitate to ask for help in the [http://speeddemosarchive.com/forum/index.php/board,5.0.html Tech Support forum]. |

<br> | <br> | ||

| + | |||

==Installation / plugins== | ==Installation / plugins== | ||

| − | Go to http://www.avisynth.org/ or http://sourceforge.net/project/showfiles.php?group_id=57023 | + | Go to http://www.avisynth.org/ or http://sourceforge.net/project/showfiles.php?group_id=57023 to download AviSynth 2.5.7. Note that Part 11: SDA StatID cannot be completed with version 2.5.6 or lower. |

| + | |||

| + | Download these necessary [[Media:avisynthplugins.zip|avisynth plugins]]. | ||

| − | With | + | With AviSynth installed, go to Start menu -> [All] Programs -> AviSynth -> Plugin Directory. This will open the directory where AviSynth stores its plugins. Copy the files from inside the AviSynth plugins zip file to the AviSynth plugins directory window you just opened. Note that you can't copy the unzipped directory itself; you have to copy the files <i>inside</i> it into the AviSynth plugins directory. This is because AviSynth won't recognize plugins that sit in a subdirectory of the plugins directory. |

| + | If AviSynth complains that the MSVCR71.dll file is missing, you can download it at http://www.dll-files.com/. Place it in your "C:\WINDOWS\SYSTEM32\" directory. | ||

<br> | <br> | ||

| − | |||

| − | + | You'll also want to have [http://www.virtualdub.org/ VirtualDub] to test your script as you go along. | |

| − | Create a .txt document with a name of your choice. Rename the extension from .txt to .avs. If you can't see the extension and are running | + | ==The AviSynth script== |

| + | |||

| + | Create a .txt document with a name of your choice in the same folder as your source files. Rename the extension from .txt to .avs. If you can't see the extension and are running Windows, open Windows Explorer, go to Tools -> Folder Options -> View and uncheck "Hide extensions for known filetypes". | ||

Open the avs file in Notepad or any text editor. | Open the avs file in Notepad or any text editor. | ||

| + | |||

| + | |||

| + | <font color="green"><b>Tip:</b> You should create separate AviSynth scripts for each quality version that has differences between them. There's no sense in creating one AviSynth script, encoding it, editing the script, encoding again, etc. With separate AviSynth scripts you'll get fewer script errors and fewer headaches, and you'll be able to queue many encodes.</font> | ||

<br> | <br> | ||

===Part 1: Loading the plugins=== | ===Part 1: Loading the plugins=== | ||

| − | + | AviSynth will automatically load any dll and avsi files located in the plugins directory. If you're working with DVD source material, the only plugin that I recommend you load manually is DGDecode.dll since it's in its own folder. You can of course just copy and paste it into the plugins folder, but be sure to re-copy it if you update DGMPGDec. Change the file path if needed. | |

<pre><nowiki> | <pre><nowiki> | ||

| − | Loadplugin("C:\Program Files\DGMPGDec\DGDecode.dll") | + | Loadplugin("C:\Program Files\DGMPGDec\DGDecode.dll") # For DVD source |

| − | + | ||

| − | + | #### For MvBob deinterlacing. Remove the # to load it. | |

| − | + | #import("C:\Program Files\AviSynth 2.5\plugins\mvbob.avs") | |

| − | + | ||

</nowiki></pre> | </nowiki></pre> | ||

<br> | <br> | ||

| + | |||

===Part 2: Loading the source files=== | ===Part 2: Loading the source files=== | ||

| Line 66: | Line 76: | ||

* AudioDub(video, sound) | * AudioDub(video, sound) | ||

| − | + | If you used a <b>capture card</b> or <b>screen capture software (e.g., Fraps, Camtasia)</b> then it is quite simple to load the files. If avisource does not work, try directshowsource. If you are using Camtasia and chose to use its own Techsmith codec, then use directshowsource. | |

| − | + | ||

| − | + | ||

| − | + | ||

| − | If you used a <b>capture card</b> or <b>screen capture software</b> then it is quite simple to load the files. If avisource does not work, try directshowsource. | + | |

<pre><nowiki> | <pre><nowiki> | ||

### Video and audio are already combined | ### Video and audio are already combined | ||

| − | avisource(" | + | avisource("video.avi") |

### Video and audio are split | ### Video and audio are split | ||

| − | #AudioDub(avisource(" | + | #AudioDub(avisource("video.avi"), wavsource("audio.wav")) |

</nowiki></pre> | </nowiki></pre> | ||

| − | Notice the # before AudioDub. This is telling | + | Notice the # before AudioDub. This is telling AviSynth to skip over the line. If you need to use this line, remove the # and add one before the first avisource command. |
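As a sketch of what that swap looks like, here is the same block edited for split video and audio. The file names are the same placeholders as above, not real files.

<pre><nowiki>
### Video and audio are already combined
#avisource("video.avi")
### Video and audio are split
AudioDub(avisource("video.avi"), wavsource("audio.wav"))
</nowiki></pre>

Only one of the two loading lines should be active at a time, since the last active line is the one that ends up feeding the rest of the script.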

| + | |||

| + | <font color="green"><b>Tip:</b> If your avs script file is in a different folder than your source files, just use relative or absolute paths, like "c:\my work folder\video.avi".</font> | ||

<font color="green"><b>Tip:</b> If your video is separated in multiple parts, which is usually the case when recording with Fraps, then be sure to look at part 4 since it is linked to part 2.</font> | <font color="green"><b>Tip:</b> If your video is separated in multiple parts, which is usually the case when recording with Fraps, then be sure to look at part 4 since it is linked to part 2.</font> | ||

| Line 85: | Line 93: | ||

If you used a <b>DVD recorder</b> then your video and audio is most likely split. Make sure you've gone over the [[DVD|DVD page]] before continuing. | If you used a <b>DVD recorder</b> then your video and audio is most likely split. Make sure you've gone over the [[DVD|DVD page]] before continuing. | ||

<pre><nowiki> | <pre><nowiki> | ||

| − | AC3source(MPEG2source(" | + | AC3source(MPEG2source("vob.d2v",upConv=1),"vob T01 2_0ch 192Kbps DELAY -66ms.ac3") |

| − | #AudioDub(MPEG2source(" | + | #AudioDub(MPEG2source("vob.d2v",upConv=1),MPASource("vob T01 2_0ch 192Kbps DELAY -66ms.mpa")) |

</nowiki></pre> | </nowiki></pre> | ||

I hope you haven't removed the delay information from the sound files. Not that it's the end of the world if you did remove it, you'll just have to listen by ear until you get a close value with DelayAudio(). | I hope you haven't removed the delay information from the sound files. Not that it's the end of the world if you did remove it, you'll just have to listen by ear until you get a close value with DelayAudio(). | ||
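If the delay value is gone, a rough sketch of the trial-and-error approach could look like this. The file name "vob.ac3" and the 0.1 second starting guess are assumptions made up for illustration; change the number, reload the script, and listen again until the sound lines up.

<pre><nowiki>
AC3source(MPEG2source("vob.d2v",upConv=1),"vob.ac3")
# Assumed starting guess: the sound is heard about 0.1 s too early, so delay it.
# A positive value delays the audio, a negative value makes it play sooner.
DelayAudio(0.1)
</nowiki></pre>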

<br> | <br> | ||

| + | |||

===Part 3: Fixing audio delay=== | ===Part 3: Fixing audio delay=== | ||

| − | + | ====Constant audio desync==== | |

| + | |||

| + | Constant desync is when the desync at the beginning of the video is the same as the desync at the end of the video. | ||

The DelayAudio command is straightforward, but there is an extra concept worth learning. Look at the following two scripts: | The DelayAudio command is straightforward, but there is an extra concept worth learning. Look at the following two scripts: | ||

<pre><nowiki> | <pre><nowiki> | ||

| − | Ac3Source(MPEG2source("vob.d2v"),"vob DELAY -66ms.ac3") | + | Ac3Source(MPEG2source("vob.d2v",upConv=1),"vob DELAY -66ms.ac3") |

DelayAudio(-0.066) | DelayAudio(-0.066) | ||

</nowiki></pre> | </nowiki></pre> | ||

<pre><nowiki> | <pre><nowiki> | ||

| − | Ac3Source(MPEG2source("vob.d2v"),"vob DELAY -66ms.ac3").DelayAudio(-0.066) | + | Ac3Source(MPEG2source("vob.d2v",upConv=1),"vob DELAY -66ms.ac3").DelayAudio(-0.066) |

</nowiki></pre> | </nowiki></pre> | ||

Is there a difference between them? Yes and no. They both get the same result, however the script with one line makes it easier for projects where you append files together. I suggest using the format of the second script. | Is there a difference between them? Yes and no. They both get the same result, however the script with one line makes it easier for projects where you append files together. I suggest using the format of the second script. | ||

| + | |||

| + | ====Progressive audio desync==== | ||

| + | |||

| + | Progressive (gradual) desync is when the desync at the beginning of the video is <i>not</i> the same as the desync at the end of the video. For example, the audio seems fine but then later on someone fires his gun and you hear the bang a second later. This type of desync can be hard to fix since you usually have to figure out the correct desync value manually. <font color="red">THE DESYNC MUST BE GRADUAL!</font> Do not apply to audio that has an abrupt desync somewhere along the timeline. | ||

| + | |||

| + | <pre><nowiki> | ||

| + | #Positive value stretches audio, negative value shrinks audio. Value in milliseconds. | ||

| + | stretch=-300 | ||

| + | #Do not change the line below. | ||

| + | TimeStretch(tempo=(last.AudioLengthF/(last.AudioLengthF+(stretch*(last.audiorate/1000)))*100)) | ||

| + | </nowiki></pre> | ||
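To see what the formula actually computes, here is a hedged variation that stores the result in a variable and prints it on the video. The name tempoValue is made up for this sketch, and the Subtitle line is only a temporary check that should be removed before encoding.

<pre><nowiki>
stretch=-300
# Same formula as above, kept in a variable so it can be displayed
tempoValue=last.AudioLengthF/(last.AudioLengthF+(stretch*(last.audiorate/1000)))*100
TimeStretch(tempo=tempoValue)
# Temporary: show the computed tempo (in percent) on screen
Subtitle("tempo = " + String(tempoValue))
</nowiki></pre>

As a worked example, assume a 10 minute clip with 48000 Hz audio. It has 28,800,000 samples, and -300 ms corresponds to -14,400 samples, so the tempo is 28,800,000 / 28,785,600 * 100, or about 100.05. The audio plays very slightly faster and ends up about 300 ms shorter.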

| + | |||

| + | <font color="green"><b>Tip:</b> [[General_advice#Preview_avisynth_scripts_in_Media_Player_Classic|Preview avisynth scripts in Media Player Classic]]</font> | ||

<br> | <br> | ||

| + | |||

===Part 4: Appending=== | ===Part 4: Appending=== | ||

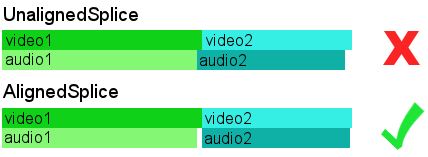

| − | One of the best features of | + | One of the best features of AviSynth is its ability to do an aligned splice when appending video. There is usually a mismatch between the length of the video and the length of the audio, typically ranging from -50 ms to +50 ms. <b>This means that appending files in VirtualDub(Mod) will in almost all cases cause a desync</b> because the audio of clip2 will be appended right after clip1. VirtualDub(Mod) is unable to do an aligned splice. Here is an illustration: |

[[Image:append.png]] | [[Image:append.png]] | ||

| Line 125: | Line 150: | ||

### Method 3 | ### Method 3 | ||

| − | avisource("clip1.avi")++avisource("clip2.avi")++avisource("clip3.avi" | + | avisource("clip1.avi")++avisource("clip2.avi")++avisource("clip3.avi") |

| − | Ac3Source(MPEG2source("vob1.d2v"),"vob1 DELAY -66ms.ac3").DelayAudio(-0.066)++Ac3Source(MPEG2source("vob2.d2v"),"vob2 DELAY -30ms.ac3").DelayAudio(-0.030) | + | Ac3Source(MPEG2source("vob1.d2v",upConv=1),"vob1 DELAY -66ms.ac3").DelayAudio(-0.066)++Ac3Source(MPEG2source("vob2.d2v",upConv=1),"vob2 DELAY -30ms.ac3").DelayAudio(-0.030) |

### Method 4 | ### Method 4 | ||

| Line 133: | Line 158: | ||

</nowiki></pre> | </nowiki></pre> | ||

| − | Method 2 is good for when you're joining a lot of clips since it's easier to edit. Notice the double plus signs in method 3 | + | Method 2 is good for when you're joining a lot of clips since it's easier to edit. Notice the double plus signs in method 3; this is the same as AlignedSplice. One plus sign would indicate UnalignedSplice. Method 4 is to join independent AviSynth scripts. |

<br> | <br> | ||

| + | |||

===Part 5: Deinterlacing / Full framerate video=== | ===Part 5: Deinterlacing / Full framerate video=== | ||

| − | + | Deinterlacing isn't needed for video that is not interlaced such as computer games recorded with Fraps/Camtasia, or if your console game outputs in progressive mode and you captured in progressive mode as well. Otherwise, if your footage looks anything like the picture below with the horizontal lines, then you definitely need to deinterlace. | |

| + | |||

| + | <font color="green"><b>Tip:</b> The SelectEven() command used in this section is still useful for those with beefy computers who recorded their computer game at 60 fps. The command will get you half framerate (30 fps) needed for LQ/MQ.</font> | ||
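A minimal sketch of that tip, assuming "video.avi" is a 60 fps progressive recording of a computer game:

<pre><nowiki>
avisource("video.avi")   # assumed to be a 60 fps Fraps recording
SelectEven()             # keeps frames 0, 2, 4, ... which gives 30 fps
</nowiki></pre>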

| + | |||

[[Image:dmc3interlaced.jpg]] | [[Image:dmc3interlaced.jpg]] | ||

| + | <br> | ||

| − | + | ====What is deinterlacing?==== | |

| − | < | + | It's important that you understand <i>why</i> you'll be using whichever deinterlacing method is needed for the video you recorded. Since I'm lazy, I'll briefly explain what deinterlacing does. |

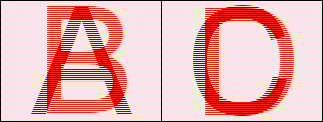

| − | + | An interlaced frame has two fields. Think of it as a single frame with two smaller frames inside. Those two fields (the smaller frames) represent two different moments in time. Take the picture as an example, with four different fields, A,B,C,D: | |

| − | + | ||

| − | + | ||

| − | + | ||

| − | This is | + | [[Image:abcd_interlaced.png]] |

| + | |||

| + | When deinterlaced by separating the fields, we get: | ||

| + | |||

| + | [[Image:abcd_deinterlaced.png]] | ||

| + | |||

| + | This is how video that has been recorded at 29.97 fps interlaced can be deinterlaced to achieve 59.94 fps progressive. | ||

| + | |||

| + | You may be wondering: "doesn't splitting the fields squish the video?". Well, yes, but it's not a problem for low resolution video since we can just resize it horizontally. For high resolution, experts out there have created filters like telecide and mvbob to reconstruct the height. | ||

| + | |||

| + | Here's where it gets a little more complicated... Some games only run at half framerate (or even lower) but are still interlaced. So, instead of A/B,C/D becoming A,B,C,D for full framerate you get A/A,B/B becoming A,A,B,B. Obviously, there's no point in keeping the duplicate frames. These games are usually very easy to deinterlace with good results, but you have to learn how to determine the framerate of your game. | ||

| + | |||

| + | <br> | ||

| + | |||

| + | ====Determining the framerate of the game==== | ||

| + | |||

| + | First, check to see if your game is listed on [[DF|this page]]. If it's not there, read on. | ||

| + | |||

| + | By this point in the guide, you should have a script similar to this: | ||

<pre><nowiki> | <pre><nowiki> | ||

| − | + | Loadplugin("C:\Program Files\DGMPGDec\DGDecode.dll") | |

| − | + | ||

| + | Ac3Source(MPEG2source("segment1.d2v",upConv=1),"segment1 192Kbps DELAY -48ms.ac3").DelayAudio(-0.048) | ||

</nowiki></pre> | </nowiki></pre> | ||

| − | <b> | + | Add <b>SeparateFields()</b> at the end and save your script. Load this script into VirtualDub(Mod). You'll notice that the video has been squished vertically; don't worry about this. |

| − | + | ||

| − | + | To make it easier for you, move to a part of your video that has a lot of motion. Also make sure you're looking at actual gameplay, not a cutscene, since gameplay is much more important for speed runs. Use the buttons at the bottom of VirtualDub(Mod) to step through your video frame by frame. What you're looking for is difference in motion. If you see unique motion every single frame, then your game is full framerate. If you see unique motion every two frames, the game is half framerate. If it's every three frames, you've got one-third framerate. | |

| + | For example, if U=unique and D=duplicate, you can write full framerate as a sequence of frames U,U,U,U,etc, and you can write half framerate as a sequence of frames U,D,U,D,etc. | ||
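If it helps with note-taking, the temporary inspection script could also stamp a number on every field. This is only a sketch built on the example script above; ShowFrameNumber() is a built-in AviSynth filter, and both extra lines should be removed again once you know the framerate.

<pre><nowiki>
Loadplugin("C:\Program Files\DGMPGDec\DGDecode.dll")

Ac3Source(MPEG2source("segment1.d2v",upConv=1),"segment1 192Kbps DELAY -48ms.ac3").DelayAudio(-0.048)
SeparateFields()
# Optional: print the field number on the picture while stepping through
ShowFrameNumber()
</nowiki></pre>

With the numbers visible you can write down a run such as 200=U, 201=D, 202=U, 203=D and read the pattern off directly.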

| + | |||

| + | There are some games that have a strange sequence of frames. We recently saw one in Tech Support for a PAL game that had a sequence of frames U,D,U,U,D,etc. If you get something strange like that, make a topic in Tech Support about it so we can look at it. | ||

| + | |||

| + | Before leaving this section, make sure you've determined whether your game is F1 (full framerate), F2 (half framerate) or F3 (third framerate). | ||

| + | |||

| + | Remove <b>SeparateFields()</b> from the script. | ||

| + | |||

| + | <br> | ||

| + | |||

| + | ====Determining the resolution of the game==== | ||

| + | |||

| + | <font color="green">If you're unfortunate enough to have recorded your game at a low resolution, then this section probably doesn't matter.</font> | ||

| + | |||

| + | First, check to see if your game is listed on [[DF|this page]]. If it's not there, read on. | ||

| + | |||

| + | This section requires a bit of your own research. [http://en.wikipedia.org/wiki/ Wikipedia] is a good place to start. Older consoles like the NES and Sega Genesis can only output in low resolution, however the SNES, N64 and PS1 are capable of high resolution. From the PS2 and onwards, high resolution for games is more probable. | ||

| + | |||

| + | http://www.google.com | ||

| + | |||

| + | <br> | ||

| + | |||

| + | ====Deinterlacing==== | ||

| + | |||

| + | Refer to the [[Glossary of terms]] if needed. | ||

| + | |||

| + | Now that you know the framerate and resolution of your game, you can do some actual deinterlacing. Note that there are limitations on the quality versions that SDA produces. | ||

| + | |||

| + | * LQ for Xvid/H.264 must be half or third framerate, low resolution. | ||

| + | * MQ for Xvid must be half or third framerate, low resolution. | ||

| + | * MQ for H.264 can be any framerate you want, low resolution. | ||

| + | * HQ/IQ for Xvid/H.264 must be full framerate (if available), high resolution (if available). | ||

| + | |||

| + | Ask yourself which one you want to do, then follow the appropriate link/section: | ||

| + | |||

| + | [[AviSynth#F1_-_Full_framerate_-_high_resolution |F1 - Full framerate - high resolution]]<br> | ||

| + | [[AviSynth#F1_-_Full_framerate_-_low_resolution |F1 - Full framerate - low resolution]]<br> | ||

| + | [[AviSynth#F2_-_Half_framerate_-_high_resolution |F2 - Half framerate - high resolution]]<br> | ||

| + | [[AviSynth#F2_-_Half_framerate_-_low_resolution |F2 - Half framerate - low resolution]]<br> | ||

| + | [[AviSynth#F3_-_One_third_framerate |F3 - One third framerate]] | ||

| + | |||

| + | <br> | ||

| + | |||

| + | =====F1 - Full framerate - high resolution===== | ||

| + | |||

| + | For full resolution deinterlacing, LeakKernelDeint or mvbob comes into play. If you notice too many "lines," or interlacing artifacts, as we like to call them, then lower the threshold value. The downside of lowering it is that you end up with more jagged edges and a loss of detail. Use what you think is best. | ||

| + | |||

| + | <pre><nowiki> | ||

| + | ### You may have to set order to 0. Try both to see which one works. | ||

| + | LeakKernelBob(order=1,threshold=10,sharp=true,twoway=true,map=false) | ||

| + | </nowiki></pre> | ||

| + | |||

| + | Those who want more quality at the cost of encoding speed can use mvbob instead of leakkernelbob. Nate uses this himself, so if you want to go the SDA way, go with mvbob. Beware; this thing is very, very slow. But it comes with a free Frogurt! You may also want to read this [http://speeddemosarchive.com/kb/index.php/General_advice#Save_time_with_MvBob tip] from General Advice to save time. | ||

| + | |||

| + | <pre><nowiki> | ||

| + | ### Use complementparity if the motion of the video seems to go back and forth. | ||

| + | #ComplementParity() | ||

| + | ConvertToYUY2() | ||

| + | mvbob() | ||

| + | </nowiki></pre> | ||

| + | |||

| + | Here are two pictures that show the difference between mvbob and LeakKernelBob. Loading the pages in two tabs and switching back and forth is recommended. | ||

| + | |||

| + | [http://speeddemosarchive.com/w/images/7/7e/Mvbob.PNG mvbob] | ||

| + | |||

| + | [http://speeddemosarchive.com/w/images/b/bf/LeakKernelBob.PNG LeakKernelBob] | ||

| + | |||

| + | Note how LeakKernelBob tends to create artifacts near edges. | ||

| + | |||

| + | <br> | ||

| + | |||

| + | =====F1 - Full framerate - low resolution===== | ||

| + | |||

| + | Method 1 - Pros: Easy and fast. Cons: You'll notice a bobbing effect which is unpleasant to the eye. Harder for the encoder, bitrate usage is higher. | ||

<pre><nowiki> | <pre><nowiki> | ||

### Use complementparity if the motion of the video seems to go back and forth. | ### Use complementparity if the motion of the video seems to go back and forth. | ||

| Line 170: | Line 289: | ||

</nowiki></pre> | </nowiki></pre> | ||

| − | + | Method 2 - Pros: Zero bobbing. Great for the encoder, bitrate usage is lower. Cons: Slightly blurry image from resizing. Slow because of the mvbob filter (don't even think about using leakkernelbob). | |

| + | <pre><nowiki> | ||

| + | ### Use complementparity if the motion of the video seems to go back and forth. | ||

| + | #ComplementParity() | ||

| + | mvbob() | ||

| + | (RESIZE FILTER GOES HERE, check part 7) | ||

| + | (SHARPENING FILTER GOES HERE, check part 8) | ||

| + | </nowiki></pre> | ||

| + | Method 2 is commonly used for Game Boy footage. Sharpening is probably a good idea, too, but don't go overboard. | ||
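For illustration, Method 2 with the two placeholders filled in might look like the sketch below. The 320x240 target is an assumption for NTSC low resolution (check Part 7 for the value that applies to you), the XSharpen strengths are simply the ones from the introduction, and mvbob is assumed to be loaded as described in Part 1.

<pre><nowiki>
### Use complementparity if the motion of the video seems to go back and forth.
#ComplementParity()
mvbob()
lanczos4resize(320,240)   # assumed low resolution target, see Part 7
ConvertToYV12()           # XSharpen works in YV12, see Part 8
XSharpen(30,40)           # example strengths, lower them if it looks harsh
</nowiki></pre>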

| + | |||

| + | |||

| + | Method 3 - This method eliminates bobbing by shifting fields. It is much faster than method 2, but may not work as well for some types of video. Try both on a sample and if the difference is unnoticeable, use this method. | ||

<pre><nowiki> | <pre><nowiki> | ||

| − | + | separatefields() | |

| − | + | converttorgb32 | |

| + | clip1=SelectEven.Crop(0,0,0,last.height).AddBorders(0,0,0,0) | ||

| + | clip2=SelectOdd.Crop(0,0,0,last.height-1).AddBorders(0,1,0,0) | ||

| + | Interleave(clip1,clip2) | ||

| + | converttoyv12 | ||

</nowiki></pre> | </nowiki></pre> | ||

| − | + | <br> | |

| + | |||

| + | =====F2 - Half framerate - high resolution===== | ||

| + | |||

| + | In certain cases gameplay runs at 30 fps (25 for PAL), but you still end up with interlaced video. This is because the fields have shifted out of order, which is called combing. All you need to do is match each field back to its proper frame and the video will be deinterlaced, or decombed. It sounds simple, but these games usually seem to be progressive at one point and combed at another, almost as if at random. This is fixed by using the Decomb filter with the Telecide() command. | ||

| + | |||

| + | <pre><nowiki>### Use complementparity if the motion of the video seems to go back and forth. | ||

| + | #ComplementParity() | ||

| + | Telecide()</nowiki></pre> | ||

| + | |||

| + | With games that run at half framerate, there might be certain small parts in the game that are actually at full framerate such as menu screens. Since they are usually just a small part of gameplay, you can just ignore it and let the Decomb filter deinterlace it. | ||

| + | |||

| + | You can also manually tell the Decomb filter to decomb certain parts. You can do so by creating a file called decomb.tel in the same directory as the avs file. First change your Telecide() command. | ||

| + | |||

| + | <pre><nowiki> | ||

| + | Telecide(ovr="decomb.tel")</nowiki></pre> | ||

| + | |||

| + | Now open up decomb.tel and tell it what frames you want matched. For example: | ||

| + | |||

| + | <pre><nowiki> | ||

| + | 100,200 n | ||

| + | 500,600 n</nowiki></pre> | ||

| + | |||

| + | This says frames 100-200 and 500-600 will be matched with the next. Use p for the previous frame and c for the current frame. If you're not sure, just try them all out. | ||

| + | |||

| + | <br> | ||

| + | |||

| + | =====F2 - Half framerate - low resolution===== | ||

| + | |||

| + | This is the easiest kind of deinterlacing. You barely even have to think about it. Looking at the picture above, notice all the individual horizontal lines. These show the fields. Every even numbered line is one field, while every odd numbered line is the other field. With half framerate we simply remove one of the fields. This reduces the height of the video by half; we'll take care of the width later on when resizing. | ||

| + | |||

| + | <pre><nowiki> | ||

| + | separatefields() | ||

| + | selecteven() | ||

| + | </nowiki></pre> | ||

| + | |||

| + | =====F3 - One third framerate===== | ||

| + | |||

| + | Used for games that have problems with flickering sprites, typically what you see in older games. This is probably the trickiest kind of deinterlacing, so you may want to consult with others in the [http://speeddemosarchive.com/forum/index.php/board,5.0.html Tech Support forum]. | ||

<pre><nowiki> | <pre><nowiki> | ||

### Use complementparity if the motion of the video seems to go back and forth. | ### Use complementparity if the motion of the video seems to go back and forth. | ||

#ComplementParity() | #ComplementParity() | ||

| − | + | separatefields() | |

| + | selectevery(3) | ||

</nowiki></pre> | </nowiki></pre> | ||

| + | |||

| + | http://www.avisynth.org/SelectEvery | ||
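A small sketch of how the offset can be varied, in case the default choice of fields catches the flickering sprites at the wrong moment. Which offset looks best depends on the game, so treat the value 1 below as something to experiment with rather than a recommendation.

<pre><nowiki>
separatefields()
# selectevery(3) is short for selectevery(3,0): keep fields 0, 3, 6, ...
# To keep a different field out of every three, change the offset:
selectevery(3,1)   # keep fields 1, 4, 7, ...
</nowiki></pre>

Either way, NTSC footage goes from 59.94 fields per second to 59.94 / 3 = 19.98 frames per second.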

<br> | <br> | ||

| + | |||

===Part 6: Trimming=== | ===Part 6: Trimming=== | ||

| − | Trim is used to cut out frames. The numbers inside the brackets represent the range of frames you want to keep | + | Trim is used to cut out frames. The numbers inside the brackets represent the range of frames you want to keep and are inclusive. To make it easier to find the frame range numbers, load the avs file into VirtualDub(Mod) since it displays the current frame number. |

| − | + | Note that deinterlacing methods such as leakkernelbob and mvbob will double the number of frames in your video. If you figure out the frame range before deinterlacing, but then mvbob it, you'll have to double the numbers. For example, the following two are practically equivalent (strictly, the doubled range ends at frame 62401, so the second script drops one final frame): |

| − | + | ||

| − | + | <pre><nowiki>avisource("dmc3_1.avi") | |

| − | </nowiki></pre> | + | trim(106,31200) |

| + | mvbob()</nowiki></pre> | ||

| + | <pre><nowiki>avisource("dmc3_1.avi") | ||

| + | mvbob() | ||

| + | trim(212,62400)</nowiki></pre> | ||

| − | < | + | Selecteven() will grab all even numbered frames, and discard the odd numbered. The following are equivalent, as well: |

| + | <pre><nowiki>avisource("dmc3_1.avi") | ||

| + | trim(106,31200) | ||

| + | selecteven()</nowiki></pre> | ||

| + | <pre><nowiki>avisource("dmc3_1.avi") | ||

| + | selecteven() | ||

| + | trim(53,15600)</nowiki></pre> | ||

| + | |||

| + | You can also combine trim commands. | ||

| + | |||

| + | <pre><nowiki>Trim(100,35000)++Trim(36000,50000) | ||

| + | </nowiki></pre> | ||
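One more variation that may be handy: a last frame of 0 tells Trim to keep everything up to the end of the clip. The sketch below reuses the example file name from above and cuts out only frames 35001 to 35999.

<pre><nowiki>
avisource("dmc3_1.avi")
# Keep frames 100-35000, then everything from frame 36000 to the end
Trim(100,35000)++Trim(36000,0)
</nowiki></pre>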

http://www.avisynth.org/Trim | http://www.avisynth.org/Trim | ||

| Line 206: | Line 396: | ||

<font color="red"><b>Rule 1:</b></font> Never resize to a resolution greater than that of the original source video. This is called "stretching" and does nothing to increase the quality of your video. | <font color="red"><b>Rule 1:</b></font> Never resize to a resolution greater than that of the original source video. This is called "stretching" and does nothing to increase the quality of your video. | ||

| − | <font color="red"><b>Rule 2:</b></font> | + | <font color="red"><b>Rule 2:</b></font> Never resize to a resolution greater than that of the original game! You may have captured that NES game at full resolution, but it truly plays at low resolution. |

| + | |||

| + | <b>Correcting the aspect ratio</b> | ||

| + | |||

| + | The NTSC DVD format is 720x480, which is an aspect ratio of 1.5:1, while your game probably plays at a ratio of 1.33:1, or 4:3. The correct resolution is 640x480. | ||
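The arithmetic behind that number, written out as a short sketch (this assumes a 720x480 NTSC capture of a 4:3 game):

<pre><nowiki>
# Keep the height of 480 and work out the 4:3 width: 480 * 4 / 3 = 640
lanczos4resize(640,480)
</nowiki></pre>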

<b>Resizing for HQ/IQ full resolution:</b> | <b>Resizing for HQ/IQ full resolution:</b> | ||

| Line 231: | Line 425: | ||

lanczos4resize(352,288) | lanczos4resize(352,288) | ||

</nowiki></pre> | </nowiki></pre> | ||

| + | |||

| + | Be sure to read Part 8: Sharpening since resizing can possibly make the picture too blurry. | ||

| + | |||

http://www.avisynth.org/Resize<br> | http://www.avisynth.org/Resize<br> | ||

| Line 239: | Line 436: | ||

===Part 8: Sharpening=== | ===Part 8: Sharpening=== | ||

| − | + | When a picture or video has gone through significant resizing you usually end up with a blurry image. This is where sharpening comes in. Those who recorded their computer game with screen capture software are pretty much guaranteed a blurry image when resizing to the MQ resolution. Same with those who chose to deinterlace their video to the MQ resolution with the mvbob + resize method. Do not go overboard with the sharpening; play with the values until it looks right. |

Since XSharpen works in the YV12 colorspace, you will have to convert it first. | Since XSharpen works in the YV12 colorspace, you will have to convert it first. | ||

| Line 251: | Line 448: | ||

<br> | <br> | ||

===Part 9: Cropping / Adding borders=== | ===Part 9: Cropping / Adding borders=== | ||

| + | |||

| + | You may be tempted to crop out the black borders of console-recorded footage. It's best to leave them in; otherwise you're going to make more work for yourself dealing with strange resolutions, encoders not accepting them, and possibly using the resize command later only to get an incorrect aspect ratio. Cropping should be used sparingly. | ||

The following code corresponds to the illustration. | The following code corresponds to the illustration. | ||

| Line 261: | Line 460: | ||

| − | You will probably not use the crop and add border commands. A good example of its combined use is if you want to get rid of some noise at the bottom of the video. You would crop it away, then add the border back. Only do this if the noise is along the black border of the video, | + | You will probably not use the crop and add border commands. A good example of their combined use is getting rid of some noise at the bottom of the video: you would crop it away, then add the border back. Only do this if the noise is along the black border of the video, since you don't want to crop away gameplay footage. |

<pre><nowiki> | <pre><nowiki> | ||

| Line 269: | Line 468: | ||

<br> | <br> | ||

| + | |||

===Part 10: Color / Brightness=== | ===Part 10: Color / Brightness=== | ||

| − | Be very careful when playing around with color and brightness. If your video is too bright and looks greyish it will be rejected. Feel free to ask others in the [http://speeddemosarchive.com/ | + | Be very careful when playing around with color and brightness. If your video is too bright and looks greyish it will be rejected. Feel free to ask others in the [http://speeddemosarchive.com/forum/index.php/board,5.0.html Tech Support forum] for their opinions about your video. |

http://www.avisynth.org/Tweak | http://www.avisynth.org/Tweak | ||

http://www.avisynth.org/Levels | http://www.avisynth.org/Levels | ||

| + | |||

| + | http://forum.doom9.org/showthread.php?t=93571 | ||

<br> | <br> | ||

| + | |||

===Part 11: SDA StatID=== | ===Part 11: SDA StatID=== | ||

| Line 286: | Line 489: | ||

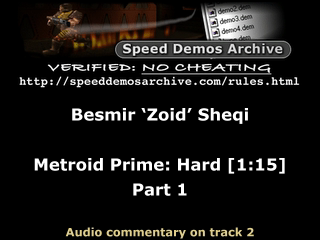

[[Image:StatIDexample.png]] | [[Image:StatIDexample.png]] | ||

| − | SDA realizes that those who encode their own runs and need manual timing can't show the time in the StatID, since final encodes are | + | SDA realizes that those who encode their own runs and need manual timing can't show the time in the StatID, since final encodes are submitted to SDA <i>and then</i> timed. Until a solution is found, just make one without the time. A partial StatID is better than none. |

| − | You will need to install [http://sourceforge.net/project/showfiles.php?group_id=57023 Avisynth 2.5.7 | + | You will need to install [http://sourceforge.net/project/showfiles.php?group_id=57023 Avisynth 2.5.7] or later in order for the script to work. You also need to download the [[Media:statidheader.png|StatID header]] and place it in the same folder as your avisynth script, or make sure the paths are correct in the script. |

The script is designed to work with any source file at any resolution and at any framerate. The only thing you need to change is the subtitle. The \n indicates a new line. Place the code at the end of your script. | The script is designed to work with any source file at any resolution and at any framerate. The only thing you need to change is the subtitle. The \n indicates a new line. Place the code at the end of your script. | ||

<pre><nowiki> | <pre><nowiki> | ||

| − | #StatID version 1. | + | ###StatID version 1.5 |

| − | + | mySubtitle="""Besmir ‘Zoid’ Sheqi\n\nMetroid Prime: Hard [1:15]\nPart 1""" | |

| − | + | template=last | |

| − | FontSize = ( | + | StatIDheader = (template.width < 640 ? ImageSource("statidheader.png").lanczos4resize(template.width,round(172.0*template.width/640.0)) : ImageSource("statidheader.png")) |

| − | StatID = Blankclip( | + | FontSize = round(template.width * 0.05 + 4) |

| − | StatID = Overlay(StatID, | + | StatID = Blankclip(template,length=round(template.framerate*5)) |

| − | StatID = Subtitle(StatID, | + | StatID = Overlay(StatID,StatIDheader,x=(template.width-StatIDheader.width)/2,y=2) |

| + | StatID = Subtitle(StatID,mySubtitle,font="Verdana",size=FontSize,text_color=$FFFFFF,align=5,lsp=40) | ||

| + | #StatID = Subtitle(StatID,"Audio commentary on track 2",font="Verdana",size=round(FontSize*0.7),text_color=$E1CE8B,align=2) | ||

| + | StatID = StatID.ConvertToYV12() | ||

| − | StatID++ | + | StatID++last++StatID |

| − | + | ||

</nowiki></pre> | </nowiki></pre> | ||

| − | + | Try to understand the concept behind the line "template=last" in the StatID. When creating the StatID from scratch, how do we know what resolution and framerate to use? We can't just put anything, otherwise we won't be able to join the StatID with the actual gameplay. One way is to manually input the exact values in the whole script. A better way is to make AviSynth look at what you've already set up (the gameplay), and extract the information needed. So, the "last" thing being worked on goes into a "template" and the script uses that. | |

| + | |||

| + | Now that you understand the concept, note that you might not use "last" in "template=last". Same goes for the "last" in "StatID++last++StatID" (StatID is repeated at the end to try to fool pirates who cut off the StatID and steal the video). Take sample script #2 as an example. | ||

<br> | <br> | ||

| + | <b>Audio Commentary</b> | ||

| + | |||

| + | If you are adding audio commentary to your run you may wish to add a note at the bottom of the StatID stating this. You will notice in the script that one line has been commented out with a single # sign. Removing it will enable the new subtitle. | ||

| + | |||

| + | <br> | ||

| + | ===Part 12: QuickTime compatibility=== | ||

| + | |||

| + | You wouldn't expect that something in AviSynth could cause problems with QuickTime; usually it's a result of incorrect encoding. Mp4 files store a value called 'timescale' that defines the rate at which the video should play. Sometimes AviSynth can cause the timescale to go as high as 10000000 instead of a more normal 2997, which breaks playback in QuickTime. Thankfully, there's an easy way to ensure that this won't happen. Add this piece of code at the end of your script. | ||

| + | |||

| + | <pre><nowiki>changefps(last.framerate)</nowiki></pre> | ||

| + | |||

| + | <br> | ||

| + | |||

==Sample scripts== | ==Sample scripts== | ||

| Line 313: | Line 533: | ||

<pre><nowiki> | <pre><nowiki> | ||

Loadplugin("C:\Program Files\DGMPGDec\DGDecode.dll") | Loadplugin("C:\Program Files\DGMPGDec\DGDecode.dll") | ||

| − | |||

| − | |||

| − | Ac3Source(MPEG2source("segment3.d2v"),"segment3 192Kbps DELAY -66ms.ac3").DelayAudio(-0.066) | + | Ac3Source(MPEG2source("segment3.d2v",upConv=1),"segment3 192Kbps DELAY -66ms.ac3").DelayAudio(-0.066) |

LeakKernelBob(order=1,threshold=10,sharp=true,twoway=true,map=false) | LeakKernelBob(order=1,threshold=10,sharp=true,twoway=true,map=false) | ||

Trim(588,37648) | Trim(588,37648) | ||

| Line 328: | Line 546: | ||

<pre><nowiki> | <pre><nowiki> | ||

Loadplugin("C:\Program Files\DGMPGDec\DGDecode.dll") | Loadplugin("C:\Program Files\DGMPGDec\DGDecode.dll") | ||

| − | |||

| − | |||

| − | seg1 = Ac3Source(MPEG2source("segment1.d2v"),"segment1 192Kbps DELAY -66ms.ac3").DelayAudio(-0.066) | + | seg1 = Ac3Source(MPEG2source("segment1.d2v",upConv=1),"segment1 192Kbps DELAY -66ms.ac3").DelayAudio(-0.066) |

| − | seg1.separatefields().selecteven() | + | seg1 = seg1.separatefields().selecteven() |

| − | seg1.Trim(76,109763) | + | seg1 = seg1.Trim(76,109763) |

| − | seg1.lanczos4resize(320,240) | + | seg1 = seg1.lanczos4resize(320,240) |

| − | seg2 = Ac3Source(MPEG2source("segment2.d2v"),"segment2 192Kbps DELAY -32ms.ac3").DelayAudio(-0.032) | + | seg2 = Ac3Source(MPEG2source("segment2.d2v",upConv=1),"segment2 192Kbps DELAY -32ms.ac3").DelayAudio(-0.032) |

| − | seg2.separatefields().selecteven() | + | seg2 = seg2.separatefields().selecteven() |

| − | seg2.Trim(143,76875) | + | seg2 = seg2.Trim(143,76875) |

| − | seg2.lanczos4resize(320,240) | + | seg2 = seg2.lanczos4resize(320,240) |

| − | #StatID version 1. | + | #StatID version 1.5 |

| − | + | template=seg1 | |

| + | mySubtitle="""Besmir ‘Zoid’ Sheqi\n\nMetroid Prime: Hard [1:15]\nPart 1""" | ||

| − | + | StatIDheader = (template.width < 640 ? ImageSource("statidheader.png").lanczos4resize(template.width,round(172.0*template.width/640.0)) : ImageSource("statidheader.png")) | |

| − | FontSize = ( | + | FontSize = round(template.width * 0.05 + 4) |

| − | StatID = Blankclip( | + | StatID = Blankclip(template,length=round(template.framerate*5)) |

| − | StatID = Overlay(StatID, | + | StatID = Overlay(StatID,StatIDheader,x=(template.width-StatIDheader.width)/2,y=2) |

| − | StatID = Subtitle(StatID, | + | StatID = Subtitle(StatID,mySubtitle,font="Verdana",size=FontSize,text_color=$FFFFFF,align=5,lsp=40) |

| + | #StatID = Subtitle(StatID,"Audio commentary on track 2",font="Verdana",size=round(FontSize*0.7),text_color=$E1CE8B,align=2) | ||

| + | StatID = StatID.ConvertToYV12() | ||

| − | StatID++seg1++seg2 | + | StatID++seg1++seg2++StatID |

ConvertToYV12() | ConvertToYV12() | ||

</nowiki></pre> | </nowiki></pre> | ||

| − | <b> | + | <b><font color="#FF9900">2a - Alternative code, same functionality.</font> DVD source, two segments appended, for LQ and MQ DivX/Xvid, statid</b> |

<pre><nowiki> | <pre><nowiki> | ||

| − | + | Loadplugin("C:\Program Files\DGMPGDec\DGDecode.dll") | |

| + | Ac3Source(MPEG2source("segment1.d2v",upConv=1),"segment1 192Kbps DELAY -66ms.ac3").DelayAudio(-0.066) | ||

| + | separatefields() | ||

| + | selecteven() | ||

| + | Trim(76,109763) | ||

| + | lanczos4resize(320,240) | ||

| + | |||

| + | seg1=last | ||

| + | |||

| + | Ac3Source(MPEG2source("segment2.d2v",upConv=1),"segment2 192Kbps DELAY -32ms.ac3").DelayAudio(-0.032) | ||

| + | separatefields() | ||

| + | selecteven() | ||

| + | Trim(143,76875) | ||

| + | lanczos4resize(320,240) | ||

| + | |||

| + | seg2=last | ||

| + | |||

| + | AlignedSplice(seg1,seg2) | ||

| + | |||

| + | #StatID version 1.5 | ||

| + | mySubtitle="""Besmir ‘Zoid’ Sheqi\n\nMetroid Prime: Hard [1:15]\nPart 1""" | ||

| + | |||

| + | template=last | ||

| + | StatIDheader = (template.width < 640 ? ImageSource("statidheader.png").lanczos4resize(template.width,round(172.0*template.width/640.0)) : ImageSource("statidheader.png")) | ||

| + | FontSize = round(template.width * 0.05 + 4) | ||

| + | StatID = Blankclip(template,length=round(template.framerate*5)) | ||

| + | StatID = Overlay(StatID,StatIDheader,x=(template.width-StatIDheader.width)/2,y=2) | ||

| + | StatID = Subtitle(StatID,mySubtitle,font="Verdana",size=FontSize,text_color=$FFFFFF,align=5,lsp=40) | ||

| + | #StatID = Subtitle(StatID,"Audio commentary on track 2",font="Verdana",size=round(FontSize*0.7),text_color=$E1CE8B,align=2) | ||

| + | StatID = StatID.ConvertToYV12() | ||

| + | |||

| + | StatID++last++StatID | ||

| + | ConvertToYV12() | ||

| + | </nowiki></pre> | ||

| + | |||

| + | <b>3. Fraps source, split parts, one segment, for LQ and MQ, statid</b> | ||

| + | <pre><nowiki> | ||

avisource("seg7_part1.avi")++avisource("seg7_part2.avi")++avisource("seg7_part3.avi") | avisource("seg7_part1.avi")++avisource("seg7_part2.avi")++avisource("seg7_part3.avi") | ||

Trim(439,60938) | Trim(439,60938) | ||

| Line 365: | Line 620: | ||

XSharpen(35,40) # A little sharper than default 30,40 | XSharpen(35,40) # A little sharper than default 30,40 | ||

| − | #StatID version 1. | + | #StatID version 1.5 |

| − | + | mySubtitle="""Besmir ‘Zoid’ Sheqi\n\nMetroid Prime: Hard [1:15]\nPart 1""" | |

| − | + | template=last | |

| − | FontSize = ( | + | StatIDheader = (template.width < 640 ? ImageSource("statidheader.png").lanczos4resize(template.width,round(172.0*template.width/640.0)) : ImageSource("statidheader.png")) |

| − | StatID = Blankclip( | + | FontSize = round(template.width * 0.05 + 4) |

| − | StatID = Overlay(StatID, | + | StatID = Blankclip(template,length=round(template.framerate*5)) |

| − | StatID = Subtitle(StatID, | + | StatID = Overlay(StatID,StatIDheader,x=(template.width-StatIDheader.width)/2,y=2) |

| + | StatID = Subtitle(StatID,mySubtitle,font="Verdana",size=FontSize,text_color=$FFFFFF,align=5,lsp=40) | ||

| + | #StatID = Subtitle(StatID,"Audio commentary on track 2",font="Verdana",size=round(FontSize*0.7),text_color=$E1CE8B,align=2) | ||

| + | StatID = StatID.ConvertToYV12() | ||

| − | StatID++ | + | StatID++last++StatID |

ConvertToYV12() | ConvertToYV12() | ||

</nowiki></pre> | </nowiki></pre> | ||

| Line 384: | Line 642: | ||

c = avisource("seg3_1.avi")++avisource("seg3_2.avi") | c = avisource("seg3_1.avi")++avisource("seg3_2.avi") | ||

| − | a.trim(100,20877) | + | a = a.trim(100,20877) ++ a.trim(21000,30000) |

| − | b.trim(16,10988) | + | b = b.trim(16,10988) |

| − | c.trim(875,13000) | + | c = c.trim(875,13000) |

AlignedSplice(a,b,c) # Same as a++b++c | AlignedSplice(a,b,c) # Same as a++b++c | ||

| Line 396: | Line 654: | ||

<pre><nowiki> | <pre><nowiki> | ||

Loadplugin("C:\Program Files\DGMPGDec\DGDecode.dll") | Loadplugin("C:\Program Files\DGMPGDec\DGDecode.dll") | ||

| − | |||

| − | Ac3Source(MPEG2source("segment1.d2v"),"segment1 192Kbps DELAY -48ms.ac3").DelayAudio(-0.048) | + | Ac3Source(MPEG2source("segment1.d2v",upConv=1),"segment1 192Kbps DELAY -48ms.ac3").DelayAudio(-0.048) |

mvbob() | mvbob() | ||

Tweak(bright=10,cont=1.0) | Tweak(bright=10,cont=1.0) | ||

| Line 410: | Line 667: | ||

<pre><nowiki> | <pre><nowiki> | ||

Loadplugin("C:\Program Files\DGMPGDec\DGDecode.dll") | Loadplugin("C:\Program Files\DGMPGDec\DGDecode.dll") | ||

| − | |||

| − | Ac3Source(MPEG2source("segment1.d2v"),"segment1 192Kbps DELAY -48ms.ac3").DelayAudio(-0.048) | + | Ac3Source(MPEG2source("segment1.d2v",upConv=1),"segment1 192Kbps DELAY -48ms.ac3").DelayAudio(-0.048) |

separatefields() | separatefields() | ||

selecteven() | selecteven() | ||

| Line 421: | Line 677: | ||

ConvertToYV12() | ConvertToYV12() | ||

</nowiki></pre> | </nowiki></pre> | ||

| + | |||

| + | <b>6. DVD source, one segment, for HQ and IQ, deinterlacers: mvbob and telecide, brightness correction, no statid</b> | ||

| + | <pre><nowiki> | ||

| + | Loadplugin("C:\Program Files\DGMPGDec\DGDecode.dll") | ||

| + | |||

| + | Ac3Source(MPEG2source("SEG7_5155.d2v",upConv=1),"SEG7_5155_DELAY-73ms.ac3").DelayAudio(-0.073) | ||

| + | Tweak(bright=10,cont=1.0) | ||

| + | |||

| + | assumetff() | ||

| + | |||

| + | # MvBob is used for gameplay moments where the game runs at 60 fps. | ||

| + | # Telecide is used for cutscenes running at 30 fps. Framerate is | ||

| + | # doubled to match video "a". There are three cutscenes in this segment. | ||

| + | # "last" refers to the ac3source line above. | ||

| + | |||

| + | a=last.Trim(0,1720).mvbob() | ||

| + | b=last.Trim(1721,4080).telecide().changefps(a) | ||

| + | c=last.Trim(4081,11480).mvbob() | ||

| + | d=last.Trim(11481,12455).telecide().changefps(a) | ||

| + | e=last.Trim(12456,13485).mvbob() | ||

| + | f=last.Trim(13486,16680).telecide().changefps(a) | ||

| + | g=last.Trim(16681,17200).mvbob() | ||

| + | |||

| + | AlignedSplice(a,b,c,d,e,f,g) | ||

| + | |||

| + | Lanczos4Resize(640,480) | ||

| + | |||

| + | ConvertToYV12() | ||

| + | </nowiki></pre> | ||

| + | |||

| + | ==Script verification== | ||

| + | |||

| + | ===When you think you're done=== | ||

| + | |||

| + | If you're reading this guide, you're probably a beginner at AviSynth scripting and should check if your scripts are done properly. Make a topic on the [http://speeddemosarchive.com/forum/index.php/board,5.0.html Tech Support forum], tell us what game you're running, the console, whether it's NTSC or PAL, [[AviSynth#Determining_the_framerate_of_the_game|the framerate of the game]], and of course the AviSynth scripts. | ||

| + | |||

| + | |||

| + | <font color="green"><b>Note:</b> When you go through the MeGUI guide and post video samples in the forum, they may reveal mistakes in your scripts that we simply couldn't catch from the scripts alone. The goal here is to avoid the more obvious mistakes. So be prepared to make changes to your scripts later on.</font> | ||

| + | |||

| + | <br> | ||

| + | |||

| + | ===When you know you're done=== | ||

| + | |||

| + | If you are sure that your AviSynth script is done properly, you are ready to compress to [[MeGUI|H.264]] or [[XviD / mp3 with MeGUI|XviD]]. | ||

Latest revision as of 22:50, 10 September 2013

Contents

- 1 Introduction

- 2 Installation / plugins

- 3 The AviSynth script

- 3.1 Part 1: Loading the plugins

- 3.2 Part 2: Loading the source files

- 3.3 Part 3: Fixing audio delay

- 3.4 Part 4: Appending

- 3.5 Part 5: Deinterlacing / Full framerate video

- 3.6 Part 6: Trimming

- 3.7 Part 7: Resizing

- 3.8 Part 8: Sharpening

- 3.9 Part 9: Cropping / Adding borders

- 3.10 Part 10: Color / Brightness

- 3.11 Part 11: SDA StatID

- 3.12 Part 12: QuickTime compatibility

- 4 Sample scripts

- 5 Script verification

Introduction

IMPORTANT NOTE: AviSynth scripting is an advanced topic. All beginners are recommended to use Anri-chan instead.

To put it briefly, AviSynth is a video editor like VirtualDub except everything is done with scripts. For details, check Wikipedia. The most important thing to learn right now is the concept of AviSynth.

Let's say you're still using VirtualDub:

- You go through the menu or drag and drop your source video inside the program.

- You load the audio.

- You use the brackets to cut off frames you don't need.

- You go to the filters section and use resize.

- Resizing has made the picture blurry, so you add the sharpen filter.

You did all that by moving your mouse, going through menus, etc. With AviSynth you're creating a text-based file (.avs) to tell it what to do with text commands. The above would be, as an example:

- avisource("myvideo.avi")

- wavsource("myaudio.wav")

- Trim(4000,7000)

- Lanczos4Resize(320,240)

- XSharpen(30,40)

You save the avs file, and load that into MeGUI or VirtualDub for final compression. The advantages are that you don't have to go through as many menus, you don't have to remember which frames you want to cut out, you have access to more advanced deinterlacing filters like mvbob, and you can keep your scripts forever so that you don't have to start from scratch in case you want to re-encode them later.
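Put together as an actual .avs file, the steps listed above might look like the sketch below. It is only an illustration: the file names are the placeholders from the list, and AudioDub (covered in Part 2) is used on the assumption that the audio lives in a separate wav file, since a wavsource line on its own would replace the video instead of attaching the sound to it. The ConvertToYV12() call is there because XSharpen works in YV12 (see Part 8).

```avisynth
# Placeholder file names from the list above; change them to your own.
AudioDub(AviSource("myvideo.avi"), WavSource("myaudio.wav"))
Trim(4000,7000)            # keep frames 4000 through 7000
Lanczos4Resize(320,240)    # resize to 320x240
ConvertToYV12()            # XSharpen needs YV12 input
XSharpen(30,40)            # sharpen after resizing
```

Each line works on the result of the line before it, which is why the order matters.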

How to use this guide.

Yes, AviSynth can be confusing and hard to learn, but it is very rewarding once you get the hang of it. I suggest you look at the sample scripts at the bottom of the page to get an idea of what a final script looks like. Then go through each section putting whatever you need into your own script. If you are having trouble, and you probably will >:), do not hesitate to ask for help in the Tech Support forum.

Installation / plugins

Go to http://www.avisynth.org/ or http://sourceforge.net/project/showfiles.php?group_id=57023 to download AviSynth 2.5.7. Note that Part 11: SDA StatID cannot be completed with version 2.5.6 or lower.

Download these necessary avisynth plugins.

With AviSynth installed, go to Start menu -> [All] Programs -> AviSynth -> Plugin Directory. This will open the directory where AviSynth stores its plugins. Copy the files from inside the AviSynth plugins zip file into the plugins directory window you just opened. Copy the files themselves, not the folder they were unzipped into: AviSynth won't recognize plugins that sit in a subdirectory of its plugins directory.

If AviSynth complains that the MSVCR71.dll file is missing, you can download it at http://www.dll-files.com/. Place it in your "C:\WINDOWS\SYSTEM32\" directory.

You'll also want to have VirtualDub to test your script as you go along.

The AviSynth script

Create a .txt document with a name of your choice in the same folder as your source files. Rename the extension from .txt to .avs. If you can't see the extension and are running Windows, open Windows Explorer, go to Tools -> Folder Options -> View and uncheck "Hide extensions for known filetypes".

Open the avs file in Notepad or any text editor.

Tip: You should create separate AviSynth scripts for each quality version that has differences between them. There's no sense in creating one AviSynth script, encoding it, editing the script, encoding again, etc. With separate AviSynth scripts you'll get fewer script errors and fewer headaches, and you'll be able to queue many encodes.

Part 1: Loading the plugins

AviSynth will automatically load any dll and avsi files located in the plugins directory. If you're working with DVD source material, the only plugin that I recommend you load manually is DGDecode.dll since it's in its own folder. You can of course just copy and paste it into the plugins folder, but be sure to re-copy it if you update DGMPGDec. Change the file path if needed.

Loadplugin("C:\Program Files\DGMPGDec\DGDecode.dll") # For DVD source

#### For MvBob deinterlacing. Remove the # to load it.

#import("C:\Program Files\AviSynth 2.5\plugins\mvbob.avs")

Part 2: Loading the source files

There are different ways to load the source files and it all depends on what it is you're working with. Here is a list of available commands.

- avisource(video.avi)

- directshowsource(video)

- MPEG2source(video.d2v)

- Ac3source(video, sound.ac3)

- Wavsource(sound.wav)

- Mpasource(sound.mpa)

- AudioDub(video, sound)

If you used a capture card or screen capture software (e.g., Fraps, Camtasia) then it is quite simple to load the files. If avisource does not work, try directshowsource. If you are using Camtasia and chose to use its own Techsmith codec, then use directshowsource.

### Video and audio are already combined

avisource("video.avi")

### Video and audio are split

#AudioDub(avisource("video.avi"), wavsource("audio.wav"))

Notice the # before AudioDub. This is telling AviSynth to skip over the line. If you need to use this line, remove the # and add one before the first avisource command.

Tip: If your avs script file is in a different folder than your source files, just use relative or absolute paths, like "c:\my work folder\video.avi".

Tip: If your video is separated in multiple parts, which is usually the case when recording with Fraps, then be sure to look at part 4 since it is linked to part 2.

If you used a DVD recorder then your video and audio are most likely split. Make sure you've gone over the DVD page before continuing.

AC3source(MPEG2source("vob.d2v",upConv=1),"vob T01 2_0ch 192Kbps DELAY -66ms.ac3")

#AudioDub(MPEG2source("vob.d2v",upConv=1),MPASource("vob T01 2_0ch 192Kbps DELAY -66ms.mpa"))

I hope you haven't removed the delay information from the sound files. It's not the end of the world if you did; you'll just have to judge by ear until you get a close value with DelayAudio().
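If the delay value really is lost, one way to home in on it is to start from a guess and adjust it while previewing. The sketch below assumes a renamed audio file called "vob.ac3" (a made-up name) and a starting guess of -50 ms; neither comes from a real project.

```avisynth
Loadplugin("C:\Program Files\DGMPGDec\DGDecode.dll")
# "vob.ac3" is a hypothetical file whose name no longer carries the delay.
Ac3Source(MPEG2source("vob.d2v",upConv=1),"vob.ac3")
# Value is in seconds. Negative plays the audio earlier, positive later.
DelayAudio(-0.050)
```

Preview a scene with an obvious sound cue, nudge the value in small steps, and reload the script until picture and sound line up.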

Part 3: Fixing audio delay

Constant audio desync

Constant desync is when the desync at the beginning of the video is the same as the desync at the end of the video.

The DelayAudio command is straightforward, but there is an extra concept worth learning. Look at the following two scripts:

Ac3Source(MPEG2source("vob.d2v",upConv=1),"vob DELAY -66ms.ac3")

DelayAudio(-0.066)

Ac3Source(MPEG2source("vob.d2v",upConv=1),"vob DELAY -66ms.ac3").DelayAudio(-0.066)

Is there a difference between them? Yes and no. They both get the same result; however, the one-line script makes things easier for projects where you append files together. I suggest using the format of the second script.

Progressive audio desync

Progressive (gradual) desync is when the desync at the beginning of the video is not the same as the desync at the end of the video. For example, the audio seems fine but then later on someone fires his gun and you hear the bang a second later. This type of desync can be hard to fix since you usually have to figure out the correct desync value manually. THE DESYNC MUST BE GRADUAL! Do not apply to audio that has an abrupt desync somewhere along the timeline.

#Positive value stretches audio, negative value shrinks audio. Value in milliseconds.
stretch=-300
#Do not change the line below.
TimeStretch(tempo=(last.AudioLengthF/(last.AudioLengthF+(stretch*(last.audiorate/1000)))*100))
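To see what the tempo formula actually produces, here is a worked example with made-up numbers: 10 minutes of 48000 Hz audio that should end up 300 ms shorter. The variables samples and rate are stand-ins for last.AudioLengthF and last.audiorate and are not part of the guide's script. At 48 samples per millisecond, -300 ms is -14400 samples, so the tempo comes out at roughly 28800000 / 28785600 * 100, or about 100.05 percent.

```avisynth
# Illustrative numbers only, not taken from a real recording.
samples = 28800000.0    # 600 s * 48000 samples/s, a float like AudioLengthF
rate    = 48000         # stand-in for last.audiorate
stretch = -300          # shrink the audio by 300 ms
tempo   = samples / (samples + stretch * (rate / 1000)) * 100
# Show the computed value instead of processing any audio.
MessageClip("tempo = " + String(tempo) + " percent")
```

One thing to keep in mind: AviSynth divides two integers as integers, so with a 44100 Hz track the audiorate/1000 term evaluates to 44 rather than 44.1 and the correction is very slightly off. This is an observation about the arithmetic, not something the guide itself states.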

Tip: Preview avisynth scripts in Media Player Classic

Part 4: Appending

One of the best features of AviSynth is its ability to do an aligned splice when appending video. There is usually a mismatch between the length of the video and the length of the audio, typically ranging from -50 ms to +50 ms. This means that appending files in VirtualDub(Mod) will in almost all cases cause a desync because the audio of clip2 will be appended right after clip1. VirtualDub(Mod) is unable to do an aligned splice. Here is an illustration:

You will probably never use UnalignedSplice. Here are different methods for using AlignedSplice:

### Method 1

AlignedSplice(avisource("clip1.avi"), avisource("clip2.avi"), avisource("clip3.avi"))

### Method 2

a = avisource("clip1.avi")

b = avisource("clip2.avi")

c = avisource("clip3.avi")

AlignedSplice(a,b,c)

### Method 3

avisource("clip1.avi")++avisource("clip2.avi")++avisource("clip3.avi")

Ac3Source(MPEG2source("vob1.d2v",upConv=1),"vob1 DELAY -66ms.ac3").DelayAudio(-0.066)++Ac3Source(MPEG2source("vob2.d2v",upConv=1),"vob2 DELAY -30ms.ac3").DelayAudio(-0.030)

### Method 4

import("script1.avs")++import("script2.avs")++import("script3.avs")

Method 2 is good for when you're joining a lot of clips since it's easier to edit. Notice the double plus signs in method 3; this is the same as AlignedSplice. One plus sign would indicate UnalignedSplice. Method 4 is to join independent AviSynth scripts.

Part 5: Deinterlacing / Full framerate video

Deinterlacing isn't needed for video that is not interlaced such as computer games recorded with Fraps/Camtasia, or if your console game outputs in progressive mode and you captured in progressive mode as well. Otherwise, if your footage looks anything like the picture below with the horizontal lines, then you definitely need to deinterlace.

Tip: The SelectEven() command used in this section is still useful for those with beefy computers who recorded their computer game at 60 fps. The command will get you half framerate (30 fps) needed for LQ/MQ.

What is deinterlacing?

It's important that you understand why you'll be using whichever deinterlacing method is needed for the video you recorded. Since I'm lazy, I'll briefly explain what deinterlacing does.

An interlaced frame has two fields. Think of it as a single frame with two smaller frames inside. Those two fields (the smaller frames) represent two different moments in time. Take the picture as an example, with four different fields, A,B,C,D:

When deinterlaced by separating the fields, we get:

This is how video that has been recorded at 29.97 fps interlaced can be deinterlaced to achieve 59.94 fps progressive.
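A quick way to see the doubling for yourself is to let AviSynth print the clip properties before and after separating the fields. This is a minimal sketch assuming a hypothetical interlaced capture named "capture.avi"; Info() is a built-in filter that overlays the resolution and frame rate on the picture.

```avisynth
AviSource("capture.avi")   # hypothetical 29.97 fps interlaced capture
SeparateFields()           # every frame is split into its two fields
Info()                     # overlay shows half the height and double the frame rate
```

Comment out the SeparateFields() line with a # and reload to compare against the original values.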

You may be wondering: "doesn't splitting the fields squish the video?". Well, yes, but it's not a problem for low resolution video since we can just resize it horizontally. For high resolution, experts out there have created filters like telecide and mvbob to reconstruct the height.

Here's where it gets a little more complicated... Some games only run at half framerate (or even lower) but are still interlaced. So, instead of A/B,C/D becoming A,B,C,D for full framerate you get A/A,B/B becoming A,A,B,B. Obviously, there's no point in keeping the duplicate frames. These games are usually very easy to deinterlace with good results, but you have to learn how to determine the framerate of your game.

Determining the framerate of the game

First, check to see if your game is listed on this page. If it's not there, read on.

By this point in the guide, you should have a script similar to this:

Loadplugin("C:\Program Files\DGMPGDec\DGDecode.dll")

Ac3Source(MPEG2source("segment1.d2v",upConv=1),"segment1 192Kbps DELAY -48ms.ac3").DelayAudio(-0.048)

Add SeparateFields() at the end and save your script. Load this script into VirtualDub(Mod). You'll notice that the video has been squished vertically; don't worry about this.

To make it easier for you, move to a part of your video that has a lot of motion. Also make sure you're looking at actual gameplay, not a cutscene, since gameplay is much more important for speed runs. Use the buttons at the bottom of VirtualDub(Mod) to step through your video frame by frame. What you're looking for is difference in motion. If you see unique motion every single frame, then your game is full framerate. If you see unique motion every two frames, the game is half framerate. If it's every three frames, you've got one-third framerate.

For example, if U=unique and D=duplicate, you can write full framerate as a sequence of frames U,U,U,U,etc, and you can write half framerate as a sequence of frames U,D,U,D,etc.

There are some games that have a strange sequence of frames. We recently saw one in Tech Support for a PAL game that had a sequence of frames U,D,U,U,D,etc. If you get something strange like that, make a topic in Tech Support about it so we can look at it.

Before leaving this section, make sure you've determined whether your game is F1 (full framerate), F2 (half framerate) or F3 (third framerate).

Remove SeparateFields() from the script.

Determining the resolution of the game

If you're unfortunate enough to have recorded your game at a low resolution, then this section probably doesn't matter.

First, check to see if your game is listed on this page. If it's not there, read on.

This section requires a bit of your own research. Wikipedia is a good place to start. Older consoles like the NES and Sega Genesis can only output in low resolution, however the SNES, N64 and PS1 are capable of high resolution. From the PS2 and onwards, high resolution for games is more probable.

Deinterlacing

Refer to the Glossary of terms if needed.

Now that you know the framerate and resolution of your game, you can do some actual deinterlacing. Note that there are limitations on the quality versions that SDA produces.

- LQ for Xvid/H.264 must be half or third framerate, low resolution.

- MQ for Xvid must be half or third framerate, low resolution.

- MQ for H.264 can be any framerate you want, low resolution.

- HQ/IQ for Xvid/H.264 must be full framerate (if available), high resolution (if available).

Ask yourself which one you want to do, then follow the appropriate link/section:

F1 - Full framerate - high resolution

F1 - Full framerate - low resolution

F2 - Half framerate - high resolution

F2 - Half framerate - low resolution

F3 - One third framerate
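As a rough map of how the framerate class feeds into the simplest (low resolution) methods described in the sections below, the sketch here picks the field selection from a variable. The variable f and the file name are made up for illustration, and the high resolution methods (LeakKernelBob, mvbob, Telecide) are deliberately left out.

```avisynth
AviSource("capture.avi")   # hypothetical interlaced capture
f = 2                      # 1 = F1, 2 = F2, 3 = F3, as determined earlier
SeparateFields()
# F1 keeps every field, F2 keeps every second one, F3 every third one.
f == 1 ? last : (f == 2 ? SelectEven() : SelectEvery(3, 0))
```

In a real script you would simply write the one line that applies to your game; the conditional is only there to show the three cases side by side.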

F1 - Full framerate - high resolution

For full resolution deinterlacing, leakkerneldeint or mvbob comes into play. If you notice too many "lines," or interlacing artifacts, as we like to call them, then lower the threshold value. The downside of lowering it is that you end up with more jagged edges and a loss of detail. Use what you think is best.

### You may have to set order to 0. Try both to see which one works.
LeakKernelBob(order=1,threshold=10,sharp=true,twoway=true,map=false)

Those who want more quality at the cost of encoding speed can use mvbob instead of leakkernelbob. Nate uses this himself, so if you want to go the SDA way, go with mvbob. Beware; this thing is very, very slow. But it comes with a free Frogurt! You may also want to read this tip from General Advice to save time.

### Use complementparity if the motion of the video seems to go back and forth.
#ComplementParity()
ConvertToYUY2()
mvbob()

Here are two pictures that show the difference between mvbob and LeakKernelBob. Loading the pages in two tabs and switching back and forth is recommended.

Note how LeakKernelBob tends to create artifacts near edges.

F1 - Full framerate - low resolution

Method 1 - Pros: Easy and fast. Cons: You'll notice a bobbing effect which is unpleasant to the eye. Harder for the encoder, bitrate usage is higher.

### Use complementparity if the motion of the video seems to go back and forth.
#ComplementParity()
separatefields()

Method 2 - Pros: Zero bobbing. Great for the encoder, bitrate usage is lower. Cons: Slightly blurry image from resizing. Slow because of the mvbob filter (don't even think about using leakkernelbob).

### Use complementparity if the motion of the video seems to go back and forth.
#ComplementParity()
mvbob()
(RESIZE FILTER GOES HERE, check part 7)
(SHARPENING FILTER GOES HERE, check part 8)

Method 2 is commonly used for Game Boy footage. Sharpening is probably a good idea, too, but don't go overboard.

Method 3 - This method eliminates bobbing by shifting fields. It is much faster than method 2, but may not work as well for some types of video. Try both on a sample and if the difference is unnoticeable, use this method.

separatefields()
converttorgb32
clip1=SelectEven.Crop(0,0,0,last.height).AddBorders(0,0,0,0)
clip2=SelectOdd.Crop(0,0,0,last.height-1).AddBorders(0,1,0,0)
Interleave(clip1,clip2)
converttoyv12

F2 - Half framerate - high resolution

In certain cases gameplay will be in 30 fps (25 for PAL), but you still end up with interlaced video. This is because the fields have shifted out of order, and in this case it's called combing. All you need to do is shift the fields back with their proper frame and it'll be deinterlaced, or decombed. Sounds simple, but usually these games just seem to be progressive at one point, and combed at another; almost as if it were random. This is fixed by using the Decomb filter with the Telecide() command.

### Use complementparity if the motion of the video seems to go back and forth.
#ComplementParity()
Telecide()

With games that run at half framerate, there might be certain small parts in the game that are actually at full framerate such as menu screens. Since they are usually just a small part of gameplay, you can just ignore it and let the Decomb filter deinterlace it.

You can also manually tell the Decomb filter to decomb certain parts. You can do so by creating a file called decomb.tel in the same directory as the avs file. First change your Telecide() command.

Telecide(ovr="decomb.tel")

Now open up decomb.tel and tell it what frames you want matched. For example:

100,200 n
500,600 n

This says that frames 100-200 and 500-600 will be matched with the next frame. Use p for the previous frame and c for the current frame. If you're not sure, just try them all out.
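If you have many ranges to override, typing the file by hand gets tedious. The following is a minimal Python sketch, not part of Decomb, that builds the text of decomb.tel in the `start,end code` form shown above. The function name `format_overrides` and the tuple layout are assumptions made for this example.

```python
def format_overrides(ranges):
    """Return decomb.tel text: one 'start,end code' line per range.

    ranges is a list of (start, end, code) tuples, where code is
    'p', 'c' or 'n' (previous, current, next), as described in the guide.
    """
    lines = []
    for start, end, code in ranges:
        if code not in ("p", "c", "n"):
            raise ValueError(f"unknown match code: {code!r}")
        if start > end:
            raise ValueError(f"range start {start} is after end {end}")
        lines.append(f"{start},{end} {code}")
    return "\n".join(lines) + "\n"


# Reproduces the two-line example from the guide.
overrides = format_overrides([(100, 200, "n"), (500, 600, "n")])
print(overrides, end="")
```

To use it, write the returned text to a file named decomb.tel in the same directory as the avs file, for example with `open("decomb.tel", "w").write(overrides)`.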

F2 - Half framerate - low resolution

This is the easiest kind of deinterlacing. You barely even have to think about it. Looking at the picture above, notice all the individual horizontal lines. These show the fields. Every even numbered line is one field, while every odd numbered line is the other field. With half framerate we simply remove one of the fields. This reduces the height of the video by half; we'll take care of the width later on when resizing.

separatefields()
selecteven()
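To make the field idea concrete, here is a small Python model of what the two commands do to the picture. It is only an illustration, not how AviSynth is implemented: a frame is modelled as a list of rows, and the sketch assumes the even-numbered lines form the first field of each frame (the real order depends on the field order of your source).

```python
def separate_fields(frame):
    """Split one frame (a list of rows) into its two fields."""
    # Even-numbered lines form one field, odd-numbered lines the other.
    return [frame[0::2], frame[1::2]]


def half_framerate_low_res(frames):
    """Model separatefields() followed by selecteven()."""
    fields = []
    for frame in frames:
        fields.extend(separate_fields(frame))
    # Keep fields 0, 2, 4, ...: exactly one field per original frame.
    return fields[0::2]


# Two hypothetical 480-line frames.
frame = [f"line {n}" for n in range(480)]
kept = half_framerate_low_res([frame, frame])
print(len(kept), "frames of", len(kept[0]), "lines each")
```

The number of frames is unchanged while the height drops from 480 to 240 lines, which is why the width still has to be fixed later by resizing.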

F3 - One third framerate

Used for games that have problems with flickering sprites, typically what you see in older games. This is probably the trickiest kind of deinterlacing, so you may want to consult with others in the Tech Support forum.

### Use complementparity if the motion of the video seems to go back and forth.
#ComplementParity()
separatefields()
selectevery(3)
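As a rough illustration of which fields survive, the Python sketch below models selectevery(3) as "keep every third item, starting with the first". The helper `select_every` is written for this example only, and the NTSC field rate of 59.94 fields per second is an assumption about the source.

```python
def select_every(items, step, offset=0):
    """Keep every step-th item, starting at offset."""
    return items[offset::step]


# Field numbers after separatefields(): 0, 1, 2, ...
fields = list(range(12))
kept = select_every(fields, 3)
print(kept)

# Assuming an NTSC source (59.94 fields per second).
field_rate = 59.94
print(field_rate / 3, "frames per second after selectevery(3)")
```

Because the step is odd, the kept fields alternate between the two field types (0, 3, 6, 9, ...), which is one reason this kind of deinterlacing is trickier than the others.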

http://www.avisynth.org/SelectEvery

Part 6: Trimming

Trim is used to cut out frames. The numbers inside the brackets represent the range of frames you want to keep and are inclusive. To make it easier to find the frame range numbers, load the avs file into VirtualDub(Mod) since it displays the current frame number.

Note that deinterlacing methods such as leakkernelbob and mvbob double the number of frames in your video: source frame n becomes frames 2n and 2n+1. If you figure out the frame range before deinterlacing, but then mvbob it, you'll have to double the start frame and use double the end frame plus one. For example, the following two are equivalent:

avisource("dmc3_1.avi")
trim(106,31200)
mvbob()

avisource("dmc3_1.avi")
mvbob()
trim(212,62401)

Selecteven() will grab all even numbered frames, and discard the odd numbered. The following are equivalent, as well:

avisource("dmc3_1.avi")
trim(106,31200)
selecteven()

avisource("dmc3_1.avi")
selecteven()
trim(53,15600)
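The frame-number conversions above can be checked with a few lines of Python. This is a sketch of the arithmetic only; the function names are made up for this example. Strictly speaking, a bobbed clip holds two frames for every source frame, so the last source frame ends at 2*end+1, and the sketch uses that value.

```python
def range_after_bob(start, end):
    """Source frame n becomes frames 2n and 2n+1 after a bob deinterlacer."""
    return 2 * start, 2 * end + 1


def range_after_selecteven(start, end):
    """selecteven() keeps source frames 0, 2, 4, ...; source frame 2k becomes frame k.

    Returns the new numbers of the even source frames inside [start, end].
    This matches trim-then-selecteven only when start is even.
    """
    first = (start + 1) // 2   # round up to the first even source frame
    last = end // 2            # round down to the last even source frame
    return first, last


print(range_after_bob(106, 31200))
print(range_after_selecteven(106, 31200))
```

If the start frame you picked is odd, trimming before selecteven() keeps the odd source frames instead of the even ones, so check a sample before relying on the converted numbers.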

You can also combine trim commands.

Trim(100,35000)++Trim(36000,50000)
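Since trim ranges are inclusive, it is easy to be off by one when working out how long the joined video will be. The Python sketch below does the count for the example above; `trim_length` is a helper written for this illustration, and it does not model AviSynth's special case where an end frame of 0 means "to the end of the clip".

```python
def trim_length(start, end):
    """Number of frames kept by Trim(start, end); both ends are inclusive."""
    if end < start:
        raise ValueError("end frame must not be before start frame")
    return end - start + 1


segments = [(100, 35000), (36000, 50000)]
total_frames = sum(trim_length(start, end) for start, end in segments)
print(total_frames)
```

Frames 35001 through 35999 are the ones dropped between the two segments.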

Part 7: Resizing

Rule 1: Never resize to a resolution greater than that of the original source video. This is called "stretching" and does nothing to increase the quality of your video.

Rule 2: Never resize to a resolution greater than that of the original game! You may have captured that NES game at full resolution, but it truly plays at low resolution.

Correcting the aspect ratio

The NTSC DVD format is 720x480, which is an aspect ratio of 1.5:1, while your game probably plays at a ratio of 1.33:1, or 4:3. The correct resolution is 640x480.

Resizing for HQ/IQ full resolution:

The only time you should be resizing for HQ/IQ is if the aspect ratio is incorrect. People with NTSC DVD recorders will end up with video at 720x480 resolution, an aspect ratio of 1.5:1. This is a problem since the game you're recording probably plays at an aspect ratio of 1.33:1, more commonly referred to as 4:3. In this case you would resize the video to 640x480. PAL DVD video will come at 720x576 and needs to be resized to 704x576.

### NTSC
lanczos4resize(640,480)

### PAL
lanczos4resize(704,576)

Resizing for MQ/LQ low resolution:

This step is required to meet SDA standards.

### NTSC
lanczos4resize(320,240)

### PAL
lanczos4resize(352,288)
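The numbers in this part can be sanity-checked with a short Python sketch. It only restates the arithmetic from the guide (the NTSC aspect ratios, Rule 1, and the fact that the MQ/LQ sizes are half the HQ/IQ sizes in each dimension); the function names are invented for this example.

```python
from fractions import Fraction


def aspect_ratio(width, height):
    """Exact width:height ratio of a resolution."""
    return Fraction(width, height)


def exceeds_source(target, source):
    """Rule 1 check: True if the target is larger than the source in either dimension."""
    return target[0] > source[0] or target[1] > source[1]


def half_size(width, height):
    """MQ/LQ size: half the HQ/IQ size in each dimension."""
    return width // 2, height // 2


print(aspect_ratio(720, 480))   # NTSC DVD capture
print(aspect_ratio(640, 480))   # after resizing for HQ/IQ
print(half_size(640, 480), half_size(704, 576))
print(exceeds_source((640, 480), (720, 480)))
```

A target that makes `exceeds_source` return True would be "stretching" in the sense of Rule 1.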

Be sure to read Part 8: Sharpening since resizing can possibly make the picture too blurry.

http://www.avisynth.org/Resize

http://www.avisynth.org/ReduceBy2

Part 8: Sharpening

When a picture or video has gone through significant resizing you usually end up with a blurry image. This is where sharpening comes in. Those who recorded their computer game with screen capture software are pretty much guaranteed a blurry image when resizing to the MQ resolution. The same goes for those who chose to deinterlace their video to the MQ resolution with the mvbob + resize method. Do not go overboard with the sharpening; play with the values until it looks right.

Since XSharpen works in the YV12 colorspace, you will have to convert the video first.

ConvertToYV12()
### Defaults are 30,40
XSharpen(30,40)

Part 9: Cropping / Adding borders

You may be tempted to crop out the black border of console-recorded footage. It's best to leave it in; otherwise you're going to make more work for yourself dealing with strange resolutions, encoders not accepting them, and possibly using the resize command later only to get an incorrect aspect ratio. Cropping should be used sparingly.

The following code corresponds to the illustration.

Crop(10,8,-14,-16)

You will probably not use the crop and add border commands. A good example of their combined use is if you want to get rid of some noise at the bottom of the video. You would crop it away, then add the border back. Only do this if the noise is along the black border of the video, since you don't want to crop away gameplay footage.

Crop(0,0,0,-10)
Addborders(0,0,0,10)
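To see why cropping leads to strange resolutions, and why adding the border back avoids that, the Python sketch below works out the resulting frame sizes. It assumes a hypothetical 720x480 source and models only the sizes, treating zero or negative width/height arguments as offsets from the right and bottom edges, as in the Crop calls above.

```python
def crop_size(width, height, left, top, w_arg, h_arg):
    """Frame size after Crop(left, top, w_arg, h_arg).

    Positive w_arg/h_arg are the new width/height; zero or negative
    values are offsets from the right/bottom edge.
    """
    new_width = w_arg if w_arg > 0 else width - left + w_arg
    new_height = h_arg if h_arg > 0 else height - top + h_arg
    return new_width, new_height


def add_borders_size(width, height, left, top, right, bottom):
    """Frame size after AddBorders(left, top, right, bottom)."""
    return width + left + right, height + top + bottom


# Crop(10,8,-14,-16) on a hypothetical 720x480 source.
print(crop_size(720, 480, 10, 8, -14, -16))

# Crop(0,0,0,-10) followed by AddBorders(0,0,0,10) restores the size.
cropped = crop_size(720, 480, 0, 0, 0, -10)
print(cropped, add_borders_size(*cropped, 0, 0, 0, 10))
```

The first crop leaves an unusual size that encoders may not accept, while the crop-then-border pair returns to the original 720x480.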

Part 10: Color / Brightness

Be very careful when playing around with color and brightness. If your video is too bright and looks greyish it will be rejected. Feel free to ask others in the Tech Support forum for their opinions about your video.

http://www.avisynth.org/Levels

http://forum.doom9.org/showthread.php?t=93571

Part 11: SDA StatID

Tip: This should be the last thing you do in your script. Make sure the script works without the StatID before continuing.

SDA uses Station IDs (StatIDs) to protect the runner's and the site's identities. StatIDs are placed at the beginning of a video and shown for a few seconds. They are the next best thing to watermarks. Below is an example:

SDA realizes that those who encode their own runs and need manual timing can't show the time in the StatID, since final encodes are submitted to SDA and then timed. Until a solution is found, just make one without the time. A partial StatID is better than none.

You will need to install AviSynth 2.5.7 or later for the script to work. You also need to download the StatID header and place it in the same folder as your AviSynth script, or make sure the paths in the script are correct.

The script is designed to work with any source file at any resolution and at any framerate. The only thing you need to change is the subtitle. The \n indicates a new line. Place the code at the end of your script.

###StatID version 1.5

mySubtitle="""Besmir ‘Zoid’ Sheqi\n\nMetroid Prime: Hard [1:15]\nPart 1"""

template=last

StatIDheader = (template.width < 640 ? ImageSource("statidheader.png").lanczos4resize(template.width,round(172.0*template.width/640.0)) : ImageSource("statidheader.png"))

FontSize = round(template.width * 0.05 + 4)

StatID = Blankclip(template,length=round(template.framerate*5))

StatID = Overlay(StatID,StatIDheader,x=(template.width-StatIDheader.width)/2,y=2)

StatID = Subtitle(StatID,mySubtitle,font="Verdana",size=FontSize,text_color=$FFFFFF,align=5,lsp=40)

#StatID = Subtitle(StatID,"Audio commentary on track 2",font="Verdana",size=round(FontSize*0.7),text_color=$E1CE8B,align=2)

StatID = StatID.ConvertToYV12()

StatID++last++StatID

Try to understand the concept behind the line "template=last" in the StatID. When creating the StatID from scratch, how do we know what resolution and framerate to use? We can't just put anything, otherwise we won't be able to join the StatID with the actual gameplay. One way is to manually input the exact values in the whole script. A better way is to make AviSynth look at what you've already set up (the gameplay), and extract the information needed. So, the "last" thing being worked on goes into a "template" and the script uses that.
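To see what the script derives from the template, the Python sketch below repeats its three calculations (font size, scaled header height, and StatID length in frames) for two example clips. The formulas are copied from the StatID script; the function names and the sample resolutions and framerates are assumptions for illustration, and `avs_round` is a simple round-half-up stand-in for the script's round().

```python
import math


def avs_round(x):
    """Round half up; adequate here because all inputs are non-negative."""
    return math.floor(x + 0.5)


def statid_numbers(width, framerate):
    """Values the StatID script derives from the template clip."""
    font_size = avs_round(width * 0.05 + 4)
    # The header image is only scaled down when the clip is narrower than 640.
    header_height = avs_round(172.0 * width / 640.0) if width < 640 else None
    length = avs_round(framerate * 5)   # five seconds' worth of frames
    return font_size, header_height, length


print(statid_numbers(320, 29.97))   # hypothetical NTSC low-resolution clip
print(statid_numbers(640, 25))      # hypothetical 640-wide PAL clip
```

A header height of None means the header image is used at its native size. Because every value comes from the template, the same StatID code works unchanged for any clip.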

Now that you understand the concept, note that you might not use "last" in "template=last". Same goes for the "last" in "StatID++last++StatID" (StatID is repeated at the end to try to fool pirates who cut off the StatID and steal the video). Take sample script #2 as an example.

Audio Commentary

If you are adding audio commentary to your run you may wish to add a note at the bottom of the StatID stating this. You will notice in the script that one line has been commented out with a single # sign. Removing it will enable the new subtitle.

Part 12: QuickTime compatibility

You wouldn't expect that something in AviSynth could cause problems with QuickTime; usually such problems are a result of incorrect encoding. MP4 files store a value called 'timescale' that defines the rate at which the video should play. Sometimes AviSynth can cause the timescale to go as high as 10000000 instead of a more normal 2997, which breaks playback in QuickTime. Thankfully, there's an easy way to ensure that this won't happen. Add this piece of code at the end of your script.

changefps(last.framerate)

Sample scripts

1. DVD source, one segment, for HQ and IQ, deinterlacer: leakkerneldeint, gamma correction, no statid

Loadplugin("C:\Program Files\DGMPGDec\DGDecode.dll")

Ac3Source(MPEG2source("segment3.d2v",upConv=1),"segment3 192Kbps DELAY -66ms.ac3").DelayAudio(-0.066)

LeakKernelBob(order=1,threshold=10,sharp=true,twoway=true,map=false)

Trim(588,37648)

Lanczos4Resize(640,480)

Levels(0, 1.2, 255, 16, 235)

ConvertToYV12()

2. DVD source, two segments appended, for LQ and MQ DivX/Xvid, statid

Loadplugin("C:\Program Files\DGMPGDec\DGDecode.dll")

seg1 = Ac3Source(MPEG2source("segment1.d2v",upConv=1),"segment1 192Kbps DELAY -66ms.ac3").DelayAudio(-0.066)

seg1 = seg1.separatefields().selecteven()

seg1 = seg1.Trim(76,109763)

seg1 = seg1.lanczos4resize(320,240)

seg2 = Ac3Source(MPEG2source("segment2.d2v",upConv=1),"segment2 192Kbps DELAY -32ms.ac3").DelayAudio(-0.032)

seg2 = seg2.separatefields().selecteven()

seg2 = seg2.Trim(143,76875)

seg2 = seg2.lanczos4resize(320,240)

#StatID version 1.5

template=seg1

mySubtitle="""Besmir ‘Zoid’ Sheqi\n\nMetroid Prime: Hard [1:15]\nPart 1"""

StatIDheader = (template.width < 640 ? ImageSource("statidheader.png").lanczos4resize(template.width,round(172.0*template.width/640.0)) : ImageSource("statidheader.png"))

FontSize = round(template.width * 0.05 + 4)

StatID = Blankclip(template,length=round(template.framerate*5))

StatID = Overlay(StatID,StatIDheader,x=(template.width-StatIDheader.width)/2,y=2)

StatID = Subtitle(StatID,mySubtitle,font="Verdana",size=FontSize,text_color=$FFFFFF,align=5,lsp=40)

#StatID = Subtitle(StatID,"Audio commentary on track 2",font="Verdana",size=round(FontSize*0.7),text_color=$E1CE8B,align=2)

StatID = StatID.ConvertToYV12()

StatID++seg1++seg2++StatID

ConvertToYV12()

2a - Alternative code, same functionality. DVD source, two segments appended, for LQ and MQ DivX/Xvid, statid

Loadplugin("C:\Program Files\DGMPGDec\DGDecode.dll")

Ac3Source(MPEG2source("segment1.d2v",upConv=1),"segment1 192Kbps DELAY -66ms.ac3").DelayAudio(-0.066)

separatefields()

selecteven()

Trim(76,109763)

lanczos4resize(320,240)

seg1=last